The Icelandic Sagas tell of Erik the Red: exiled for murder in the late 10th century, he fled to southwest Greenland, establishing its first Norse settlement.

The colony took root, and by the mid-12th century there were two major settlements with a population of thousands. Greenland even gained its own bishop.

By the end of the 15th century, however, the Norse of Greenland had vanished – leaving only abandoned ruins and an enduring mystery.

Past theories as to why these communities collapsed include a change in climate and a hubristic adherence to failing farming techniques.

Some have suggested that trading commodities – most notably walrus tusks – with Europe may have been vital to sustaining the Greenlanders. Ornate items including crucifixes and chess pieces were fashioned from walrus ivory by craftsmen of the age. However, the source of this ivory has never been empirically established.

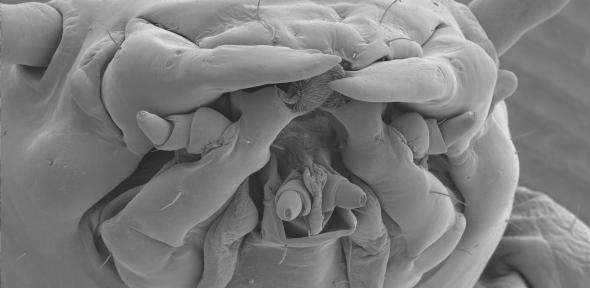

Now, researchers from the universities of Cambridge and Oslo have studied ancient DNA from offcuts of tusks and skulls, most found on the sites of former ivory workshops across Europe, in order to trace the origin of the animals used in the medieval trade.

In doing so they have discovered an evolutionary split in the walrus, and revealed that the Greenland colonies may have had a “near monopoly” on the supply of ivory to Western Europe for over two hundred years.

For the latest study, published today in the journal Proceedings of the Royal Society B, the research team analysed walrus samples found in several medieval trading centres – Trondheim, Bergen, Oslo, Dublin, London, Schleswig and Sigtuna – mostly dating between 900 and 1400 CE.

The DNA showed that, during the last Ice Age, the Atlantic walrus divided into two ancestral lines, which researchers term “eastern” and “western”. Walruses of the eastern lineage are widespread across much of the Arctic, including Scandinavia. Those of the western, however, are unique to the waters between western Greenland and Canada.

Finds from the early years of the ivory trade were mostly from the eastern lineage. Yet as demand grew from the 12th century onwards, the research team discovered that Europe’s ivory supply shifted almost exclusively to tusks from the western lineage.

They say that ivory from western lineage walruses must have been supplied by the Norse Greenlanders – by hunting and perhaps also by trade with the indigenous peoples of Arctic North America.

“The results suggest that by the 1100s Greenland had become the main supplier of walrus ivory to Western Europe – a near monopoly even,” said Dr James H. Barrett, study co-author from the University of Cambridge’s Department of Archaeology.

“The change in the ivory trade coincides with the flourishing of the Norse settlements on Greenland. The populations grew and elaborate churches were constructed.

“Later Icelandic accounts suggest that in the 1120s, Greenlanders used walrus ivory to secure the right to their own bishopric from the king of Norway. Tusks were also used to pay tithes to the church,” said Barrett.

He points out that the 11th to 13th centuries were a time of demographic and economic boom in Europe, with growing demand from urban centres and the elite served by transporting commodities from increasingly distant sources.

“The demands for luxury goods produced from ivory may have helped the far-flung Norse communities in Greenland survive for centuries,” said Barrett.

Co-author Dr Sanne Boessenkool of the University of Oslo said: “We knew from the start that analysing ancient DNA would have the potential for new historical insights, but the findings proved to be particularly spectacular.”

The new study tells us less about the end of the Greenland colonies, say Barrett and colleagues. However, they note that it is hard to find evidence of walrus ivory imports to Europe that date after 1400.

Elephant ivory eventually became the material of choice for Europe’s artisans. “Changing tastes could have led to a decline in the walrus ivory market of the Middle Ages,” said Barrett.

Ivory exports from Greenland could have stalled for other reasons: over-hunting can cause walrus populations to abandon their coastal “haulouts”; the “Little Ice Age” – a sustained period of lower temperatures – began in the 14th century; the Black Death ravaged Europe.

“Whatever caused the cessation of Europe’s trade in walrus ivory, it must have been significant for the end of the Norse Greenlanders,” said Barrett. “An overreliance on a single commodity, the very thing which gave the society its initial resilience, may have also contained the seeds of its vulnerability.”

The heyday of the walrus ivory trade saw the material used for exquisitely carved items during Europe’s Romanesque art period. The church produced much of this, with major ivory workshops in ecclesiastical centres such as Canterbury, UK.

Ivory games were also popular. The Viking board game hnefatafl was often played with walrus ivory pieces, as was chess, with the famous Lewis chessmen among the most stunning examples of Norse carved ivory.

Tusks were exported still attached to the walrus skull and snout, which formed a neat protective package that was broken up at workshops for ivory removal. These remains allowed the study to take place, as DNA extraction from carved artefacts would be far too damaging.

Co-author Dr Bastiaan Star of the University of Oslo said: “Until now, there was no quantitative data to support the story about walrus ivory from Greenland. Walruses could have been hunted in the north of Russia, and perhaps even in Arctic Norway at that time. Our research now proves beyond doubt that much of the ivory traded to Europe during the Middle Ages really did come from Greenland.”

The research was funded by the Leverhulme Trust, Nansenfondet and the Research Council of Norway.
